I Made an AI HeadSwap Machine

This is the journey I took working on face/head swapping, and why I opted for a simpler approach to the problem.

First, let's talk a little bit about deepfakes and using neural networks (NNs) for face swapping.

Face swap methods (DeepFakes)

The first face-swapping models (deepfakes) took the world by storm, and a lot of things happened because of that. Now, if a face swap is done really well, you can't tell whether it's real or not. Take this Nicolas Cage face, for example:

However, these kinds of models are very hard to train: they need hundreds or even thousands of images of both the source and the target face, and a vast amount of compute time to give these really good results.

At first these kinds of models were only available to a small group of ML experts, but luckily the community released open-source code (for example DeepFaceLab) so you can make your own face swaps. However, this only solved the code problem, not the amount of compute needed.

For me, the main problem with these models is that you need to train again for every subject you want to swap. What if you need a model that takes a face and swaps it into a premade template, without training on that face? That was the problem I was trying to solve. It would be really useful for shops and effects that only need a single-image swap.

For this I found a handful of NN methods to do this:

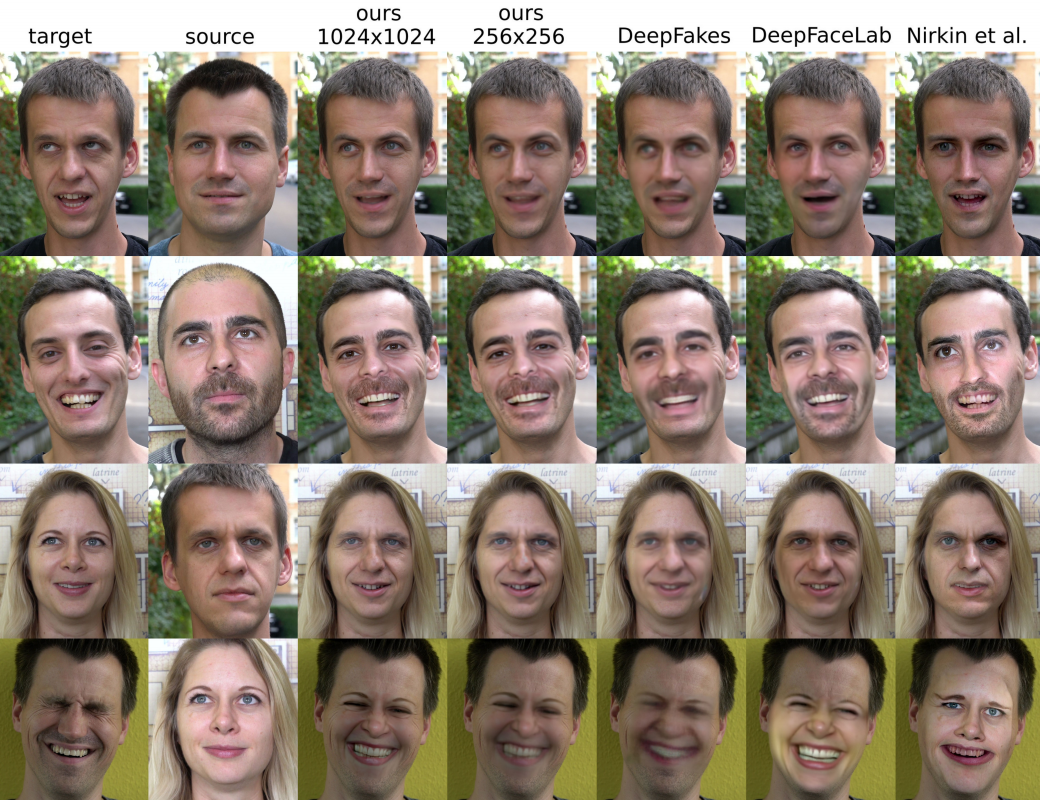

One of the methods I considered was made by Disney Research, but there wasn't a lot of code available and I needed something relatively quick to implement to see if my idea would work. Here are some examples of the neural face swapping. In the future I might try this if my resources can run it, and by that I mean putting it into a Colab notebook :D.

The NN method that I tried to train for a long time is called FaceShifter: https://arxiv.org/pdf/1912.13457v1.pdf

The paper had really good results, and it was exactly what I wanted: swapping the face of a target without needing to train on that face specifically.

Luckily there were a couple of implementations of this paper on GitHub, so I tried them out and started training the FaceShifter model:

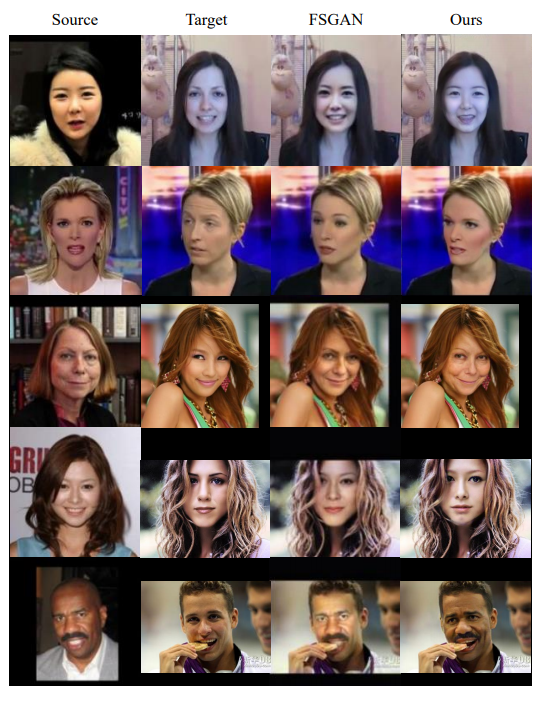

So this is the result after 200 hours of training on Colab:

So, mmh, you can see that the model is going somewhere and was starting to transfer the face successfully, but it was taking too long with the resources I had. After the 200 hours of training I was pretty frustrated, to be honest, but then, looking around GitHub, I saw a segmentation model.

And I thought: mmh, what if I use this model to crop the source face and then put it where the target face is? Then I looked around the internet for how to do this and found a couple of guides on transferring a face using facial landmarks. Awesome, I said, I can use facial landmarks and this segmentation model to swap the heads. And so I did.

Head-Swap Machine:

Great! Now let's see how this thing works. Here is, step by step, what the HeadSwap machine does.

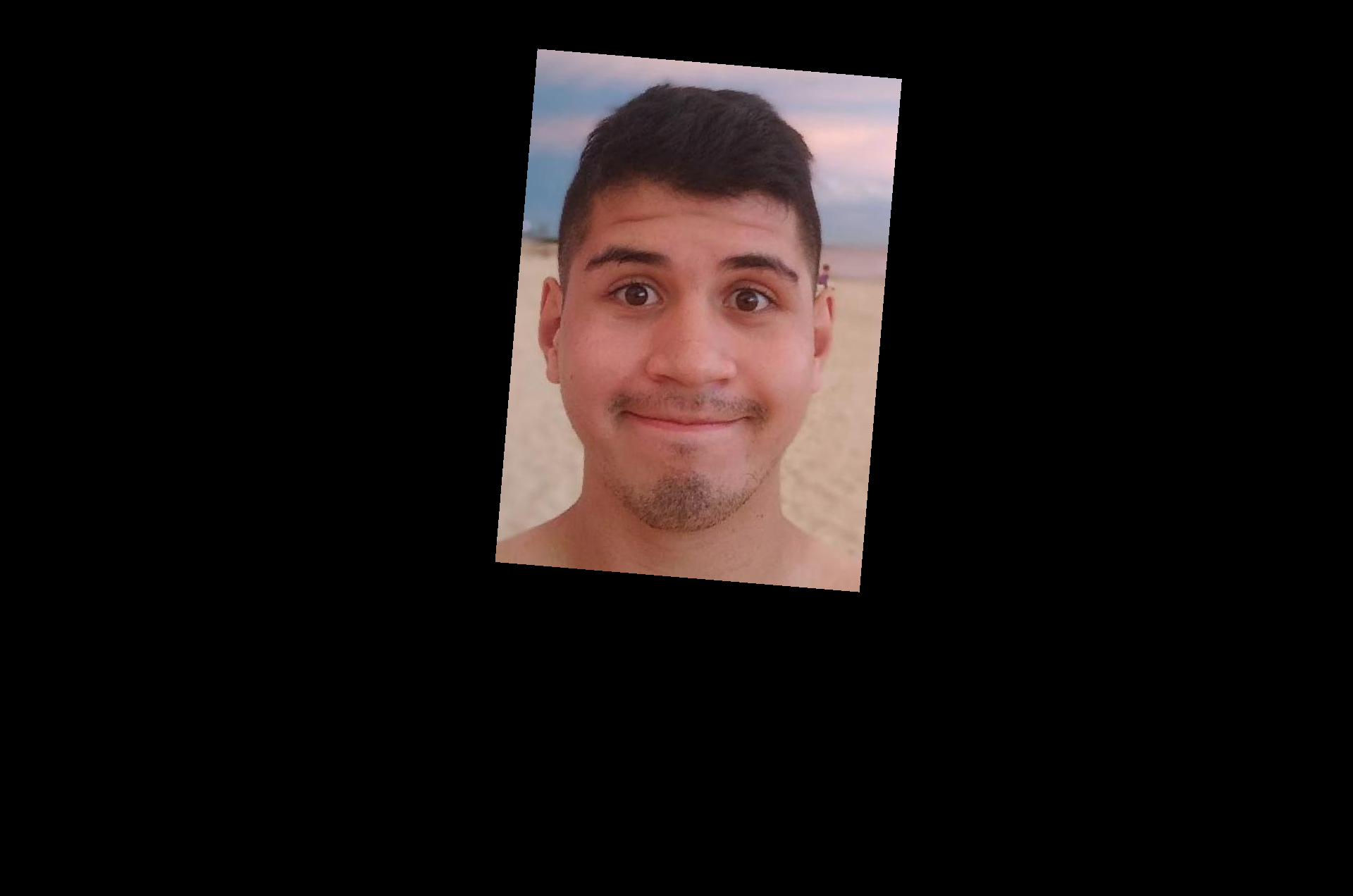

We start with 3 images: 1 input image and 2 templates. One template is used as a reference for where the target face is, and the second is a template without a face, where the source goes. And yeah, I did crop the face out of the original here xD.

Inputs

First we crop the source face to get better results, and using OpenCV's CascadeClassifier we get the landmarks of the source face and the reference.
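This isn't the exact code from the pipeline, just a minimal sketch of the cropping step. It assumes you already have a face rectangle in the `(x, y, w, h)` format that OpenCV's `CascadeClassifier.detectMultiScale` returns; the function name `crop_with_margin` and the `margin` parameter are made up for illustration.

```python
import numpy as np


def crop_with_margin(image, box, margin=0.3):
    """Crop `image` around `box`, enlarged by `margin` on every side.

    `box` is (x, y, w, h). `margin` is a hypothetical tuning knob,
    expressed as a fraction of the box width and height.
    """
    x, y, w, h = box
    pad_x, pad_y = int(w * margin), int(h * margin)
    height, width = image.shape[:2]
    # Clamp the enlarged box so it never leaves the image.
    x0, y0 = max(x - pad_x, 0), max(y - pad_y, 0)
    x1, y1 = min(x + w + pad_x, width), min(y + h + pad_y, height)
    return image[y0:y1, x0:x1]
```

Keeping some margin around the detected box leaves room for hair and chin, which matters when the goal is a head swap and not only a face swap.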

Then, using a PyTorch segmentation model trained on faces, we cut out the source face, and we smooth the edges of the mask with OpenCV's guided filter to get a better result.
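To give an idea of what smoothing the mask edges means, here is a small sketch that feathers a binary mask with a plain box blur. This is a simpler stand-in, not the guided filter the pipeline uses (a guided filter also follows the edges of the image itself), and `feather_mask` and `radius` are names I'm assuming for the example.

```python
import numpy as np


def feather_mask(mask, radius=2):
    """Soften a binary (H, W) mask with a separable box blur."""
    soft = mask.astype(np.float32)
    size = 2 * radius + 1
    kernel = np.ones(size, dtype=np.float32) / size
    for axis in (0, 1):
        # Pad with edge values so the output keeps the input shape.
        pad = [(radius, radius) if a == axis else (0, 0) for a in range(2)]
        padded = np.pad(soft, pad, mode="edge")
        # Blur every row (or column) with the 1D averaging kernel.
        soft = np.apply_along_axis(
            lambda line: np.convolve(line, kernel, mode="valid"), axis, padded
        )
    return soft
```

The result is a mask with values between 0 and 1, so the border of the face fades out instead of ending in a hard cut.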

Now, using the landmarks and OpenCV's warpAffine function, we move the source mask and the cropped face into place for the composition with the headless template.
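The idea behind the landmark warp can be sketched like this: given matching landmark points on the source and the reference, fit a 2x3 affine matrix by least squares. A matrix of that shape is what `cv2.warpAffine` takes as its transform argument. The helper names `estimate_affine` and `apply_affine` are assumptions for this example, and how the real pipeline computes its matrix may differ.

```python
import numpy as np


def estimate_affine(src_pts, dst_pts):
    """Least-squares 2x3 affine matrix mapping src_pts onto dst_pts."""
    src = np.asarray(src_pts, dtype=np.float64)
    dst = np.asarray(dst_pts, dtype=np.float64)
    # Homogeneous coordinates: each row becomes [x, y, 1].
    ones = np.ones((src.shape[0], 1))
    design = np.hstack([src, ones])
    # Solve design @ params = dst for params (3x2), then transpose to 2x3.
    params, *_ = np.linalg.lstsq(design, dst, rcond=None)
    return params.T


def apply_affine(matrix, pts):
    """Apply a 2x3 affine matrix to an (N, 2) array of points."""
    pts = np.asarray(pts, dtype=np.float64)
    return pts @ matrix[:, :2].T + matrix[:, 2]
```

You need at least three landmarks that are not on one line for the fit to be well defined. With more points, the least-squares fit averages out small landmark errors.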

Then we assemble the image, using the mask to keep only the face and adding it to the headless template, and we have this:
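The composition step itself is just a per-pixel blend. Here is a minimal sketch, assuming the warped face, the warped mask (values between 0 and 1), and the headless template all have the same height and width; `composite` is a made-up name for the example.

```python
import numpy as np


def composite(face, mask, template):
    """Blend `face` over `template`, using `mask` as per-pixel opacity."""
    alpha = mask.astype(np.float32)[..., None]  # (H, W) -> (H, W, 1)
    face_f = face.astype(np.float32)
    template_f = template.astype(np.float32)
    # Where the mask is 1 we keep the face, where it is 0 the template.
    blended = alpha * face_f + (1.0 - alpha) * template_f
    return np.clip(blended, 0, 255).astype(np.uint8)
```

With a smoothed mask, the in-between values mix both images, which hides the seam around the head.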

I think it looks pretty alright. The skin color is not the same, but this can be changed with a color-match algorithm. I was playing with one and it was giving okay results, but I need to do more testing. Still, if there isn't much skin showing in the target image, I think it looks pretty believable.
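For reference, one very simple kind of color match is to shift and scale each channel of the source so its mean and standard deviation match the target's. This is only a sketch of that general idea, done directly on the image channels, and not necessarily the algorithm I was testing; `match_color` is a hypothetical name.

```python
import numpy as np


def match_color(source, target):
    """Match per-channel mean and standard deviation of source to target."""
    src = source.astype(np.float32)
    tgt = target.astype(np.float32)
    src_mean, src_std = src.mean(axis=(0, 1)), src.std(axis=(0, 1))
    tgt_mean, tgt_std = tgt.mean(axis=(0, 1)), tgt.std(axis=(0, 1))
    # Guard against division by zero on completely flat channels.
    scale = tgt_std / np.maximum(src_std, 1e-6)
    matched = (src - src_mean) * scale + tgt_mean
    return np.clip(matched, 0, 255).astype(np.uint8)
```

In practice you would probably compute the statistics only over skin pixels (for example, inside the face mask), so the background doesn't skew the result.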

So in short we do:

- Facial Landmarks

- Segmentation of source image

- Facial Landmarks warp

- Composition

- Content-aware fill (this is done in Photoshop, but it can be done with an ML model)

- Color match and style transfer (sometimes not necessary, but the pipeline can also do that)

Alright, so what can we use this pipeline for? I think it's great for premade templates that need a manual swap of the source face. There are a ton of shops out there that do this: for example, they have a template for printing and only need to crop and paste the source face into it. Example of that.

Of course, this pipeline lacks something important: removing the target face to make room for the source face. This can be done by a lot of models, or even manually if it's a template. I may implement one of these models to make this fully automated and swap heads with only one click.

I didn't show my code or explain much about how this works under the hood because this was only the story of me solving a problem, but if you want a more in-depth explanation of the pipeline, let me know.

It's really cool that we can do this kind of stuff with ML models, and with a little bit of ingenuity you can make awesome things. I will still be looking for a model that swaps an arbitrary face onto another, maybe by continuing to train the FaceShifter model, but that's it for now. If you have an interesting idea, a problem that you can't figure out, or you just want to talk about tech and stuff, reach out to me on Twitter @ramgendeploy

Thanks for reading :D